Better Robots.txt Pro is the ultimate WordPress plugin for improving your website’s SEO through an optimized robots.txt file. Built on insights from thousands of SEO analyses, it is engineered to boost your site’s rankings and to address the chronic content-indexation problems found in over 95% of WordPress websites.

Why Choose Better Robots.txt Pro for Your WordPress Robots.txt Optimization?

The robots.txt file is a goldmine for SEO, and Better Robots.txt Pro is the key to unlocking its full potential. Here’s why it’s a game-changer:

- Effortless Creation: Generate a virtual robots.txt file for your WordPress site with just a few clicks.

- Customization at Your Fingertips: Tailor your WordPress robots.txt content with custom rules and instructions for popular web robots, including a Crawl-Delay feature.

- Sitemap Integration: Automatically detects your sitemap URL (generated by Yoast SEO or any other sitemap generator) and adds it to your robots.txt file.

- Shield Against Bad Bots: Safeguard your site by blocking malicious bots known for scraping large volumes of data.

- Multilingual Support: Available in 7 languages: English, Chinese, French, Russian, Portuguese, Spanish, and German.

- Boost WooCommerce Performance: Help search engines focus on your store’s important pages by blocking crawler access to irrelevant WooCommerce links.

- Prevent Crawler Traps: Keep crawlers out of traps that waste your crawl budget.

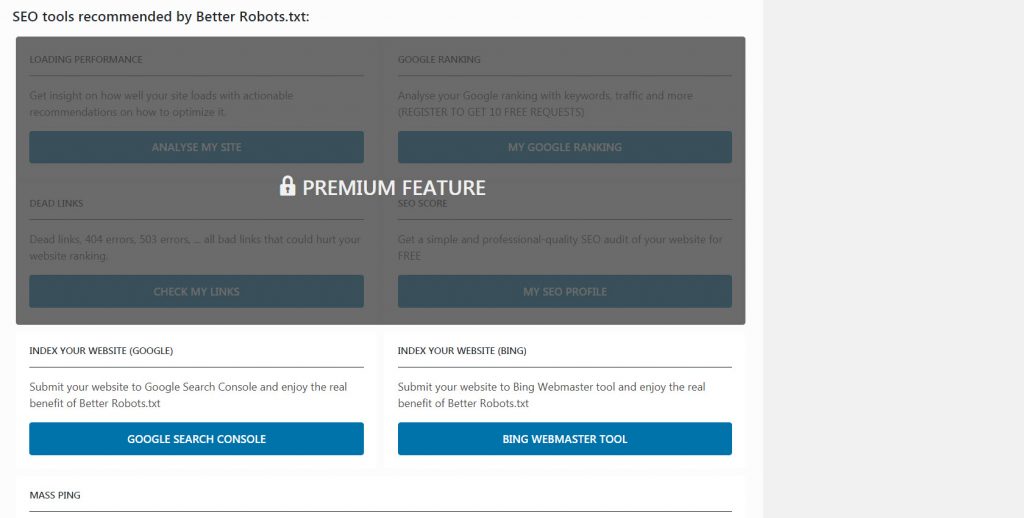

- Access to Growth Hacking Tools: Gain access to more than 150 growth hacking tools.
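Most of the features above come down to a handful of robots.txt directives: User-agent groups, Allow and Disallow rules, Crawl-delay, and Sitemap. The following Python sketch renders such a file from a list of rule groups to show how these pieces fit together. It is not the plugin’s code: the `RuleGroup` type and `build_robots_txt` function are hypothetical, and the bot name `ExampleScraperBot`, the WordPress/WooCommerce paths, and the `https://example.com/sitemap_index.xml` URL are placeholder assumptions.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class RuleGroup:
    """One User-agent group of a robots.txt file (hypothetical helper type)."""
    user_agents: List[str]
    allow: List[str] = field(default_factory=list)
    disallow: List[str] = field(default_factory=list)
    crawl_delay: Optional[int] = None


def build_robots_txt(groups: List[RuleGroup], sitemaps: List[str]) -> str:
    """Render rule groups and sitemap URLs as robots.txt text (hypothetical helper)."""
    lines: List[str] = []
    for group in groups:
        for agent in group.user_agents:
            lines.append(f"User-agent: {agent}")
        # Emit narrow Allow exceptions before Disallow rules: some parsers
        # (e.g. Python's urllib.robotparser) apply the first matching line,
        # while Google applies the most specific match; this order makes
        # both reach the same verdict for exceptions like admin-ajax.php.
        lines.extend(f"Allow: {path}" for path in group.allow)
        lines.extend(f"Disallow: {path}" for path in group.disallow)
        if group.crawl_delay is not None:
            lines.append(f"Crawl-delay: {group.crawl_delay}")
        lines.append("")  # a blank line separates groups
    lines.extend(f"Sitemap: {url}" for url in sitemaps)
    return "\n".join(lines) + "\n"


# Placeholder WordPress/WooCommerce paths; adjust them to your own site.
COMMON_DISALLOW = ["/wp-admin/", "/cart/", "/checkout/", "/my-account/"]

groups = [
    # Hypothetical scraper bot: blocked from the whole site.
    RuleGroup(["ExampleScraperBot"], disallow=["/"]),
    # A named crawler with its own rules and a Crawl-delay (in seconds).
    RuleGroup(["Bingbot"], allow=["/wp-admin/admin-ajax.php"],
              disallow=COMMON_DISALLOW, crawl_delay=10),
    # Default rules for every other crawler.
    RuleGroup(["*"], allow=["/wp-admin/admin-ajax.php"],
              disallow=COMMON_DISALLOW),
]

robots = build_robots_txt(groups, ["https://example.com/sitemap_index.xml"])
print(robots)
```

A crawler obeys only the most specific group that names it and does not fall back to the `*` rules, which is why the Bingbot group in this sketch repeats the common Disallow lines instead of inheriting them. Google ignores Crawl-delay, while Bing honors it.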

How to Optimize Better Robots.txt and Your Robots.txt File

Empower Your SEO Strategy with Better Robots.txt Pro

Better Robots.txt Pro is not just about creating a robots.txt file; it’s about making the most of an underused part of your website to strengthen your SEO. An optimized WordPress robots.txt file steers search engine crawlers toward the pages that matter and away from low-value URLs, so your crawl budget is spent on the content you want indexed, which is crucial for higher search engine rankings.
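Because robots.txt governs crawling rather than indexing directly, one way to see its effect is to check how a compliant crawler reads it before fetching each URL. The following sketch uses Python’s standard `urllib.robotparser` module. The robots.txt contents, the URLs, and the `crawl_plan` helper are hypothetical. The module’s matching is simpler than Google’s (prefix matching, first matching rule wins, no `*` or `$` wildcards inside paths), so treat the result as an approximation.

```python
import urllib.robotparser

# A hypothetical robots.txt, similar to what a plugin might serve virtually.
ROBOTS_TXT = """\
User-agent: *
Allow: /wp-admin/admin-ajax.php
Disallow: /wp-admin/
Disallow: /cart/

Sitemap: https://example.com/sitemap_index.xml
"""


def crawl_plan(robots_txt, user_agent, urls):
    """Split URLs into those a compliant crawler may fetch and those it must skip."""
    parser = urllib.robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    allowed = [u for u in urls if parser.can_fetch(user_agent, u)]
    blocked = [u for u in urls if not parser.can_fetch(user_agent, u)]
    return allowed, blocked


urls = [
    "https://example.com/",
    "https://example.com/product/blue-mug/",
    "https://example.com/cart/",
    "https://example.com/wp-admin/options.php",
    "https://example.com/wp-admin/admin-ajax.php",
]
allowed, blocked = crawl_plan(ROBOTS_TXT, "Googlebot", urls)
print("crawl:", allowed)
print("skip: ", blocked)
```

In this example the cart page and the admin screen are skipped, while the home page, the product page, and the explicitly allowed `admin-ajax.php` endpoint remain crawlable.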